The Long Tail Problem

This article was written without the use of AI.

By: Michael Patton, Chief Operating Officer and Co-Founder at MUDRICK & ASSOCIATES

“I really consider autonomous driving a solved problem. I think we are probably less than two years away from complete autonomy, safer than humans.” That’s what Elon Musk said at the Code Conference in June 2016. More than four years later, in late 2020, Tesla launched FSD Beta, but it was nowhere near actual autonomous “Full Self-Driving.” That didn’t stop Elon from saying, “I am extremely confident of achieving full autonomy and releasing it to the Tesla customer base next year [2021]. But I think at least some jurisdictions are going to allow full self-driving next year.” Over the next six years, Tesla would remain very close to solving FSD, and yet not quite there. A decade after its initial efforts began, Tesla has amassed a very impressive total of more than 8 billion supervised FSD miles and does seem to actually be on the verge of solving the Autonomous Vehicle (AV) problem once and for all… but what does this tell us?

Today, we hear many AI founders predicting that AGI is imminent. In early 2025, Anthropic CEO Dario Amodei predicted that powerful AI systems, what he called “a country of geniuses in a data center”, would emerge in late 2026 or early 2027. OpenAI CEO Sam Altman declared, “We are now confident we know how to build AGI.” Google DeepMind CEO Demis Hassabis said AGI was “probably three to five years away.” Even earlier, DeepMind co-founder and Chief AGI Scientist Shane Legg (the person credited with coining the term “AGI”) has maintained since about 2009 that we would see human-level AGI around 2025.

What makes us think that this time will be any different?

To be clear, this article is not meant to bash Elon Musk or any of these great innovators; it is simply meant to put the hard reality of the real world into perspective. Musk has learned this lesson the hard way, and he summed it up well: “Roughly 10 billion miles of training data is needed to achieve safe unsupervised self-driving. Reality has a super long tail of complexity.”

And in response to NVIDIA’s announcement that it would begin pursuing AV software, he added: “What they will find is that it’s easy to get to 99% and then super hard to solve the long tail of the distribution.”

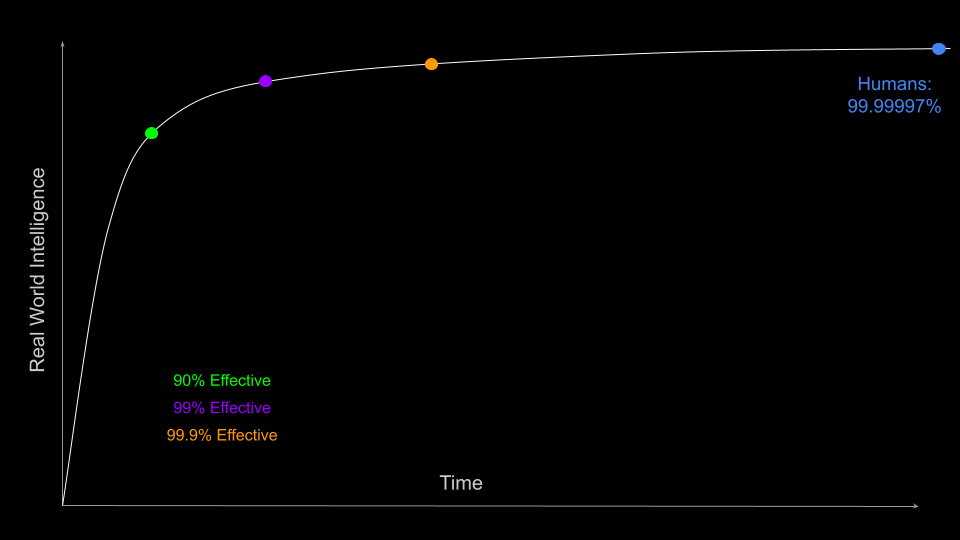

The reality is that humans are actually pretty good at this. We don’t avoid serious crashes just 99% of the time, or even 99.9% of the time; we avoid them 99.99997% of the time that we drive.

That last 0.00003%, along with everything hidden behind those trailing 9s (the edge cases: the mattress in the snowstorm, the construction zone with missing signs, the child chasing a ball at dusk), is the long tail. Closing it is brutally expensive in miles, time, and engineering effort.
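To make the size of that gap concrete, here is a small Python sketch that turns the reliability levels above (99%, 99.9%, and the 99.99997% human figure) into failure rates and “nines.” It is plain arithmetic on the article’s own numbers; it makes no assumption about how crash rates are actually measured (per mile, per trip, and so on).

```python
import math


def failure_rate(reliability_pct: float) -> float:
    """Convert a success percentage (e.g. 99.9) into a failure fraction (e.g. 0.001)."""
    return (100.0 - reliability_pct) / 100.0


def nines(reliability_pct: float) -> float:
    """Count the 'nines' of reliability as -log10 of the failure fraction."""
    return -math.log10(failure_rate(reliability_pct))


HUMAN_PCT = 99.99997  # the human-driver figure quoted in the article

for level in (99.0, 99.9, HUMAN_PCT):
    f = failure_rate(level)
    print(f"{level}% -> about 1 failure in {1 / f:,.0f} ({nines(level):.1f} nines)")

# How many times fewer failures the human level has than the 99% level
ratio = failure_rate(99.0) / failure_rate(HUMAN_PCT)
print(f"Human level: about {ratio:,.0f}x fewer failures than 99%")
```

At 99%, a system fails about once in every 100 tries; at the human figure, roughly 3 times in 10 million, which is about 33,000 times fewer failures. That ratio is why the last few nines cost so much more than the first ones.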

And it is exactly the same problem we see when companies try to deploy “autonomous” AI agents in the real world of business.

AI agents are incredible at the first 90-99%. They can process invoices, qualify leads, reconcile accounts, draft reports, or handle customer queries at superhuman speed. But the moment the data is slightly off, a business rule changes, a rare exception appears, or context shifts, the agent hallucinates, compounds errors, or simply stops altogether.

How close are we to actual AGI? I’m not sure. A lot of AI “thought leaders” are confident it could arrive as soon as later this year (2026). But what I’m seeing in the real world tells a different story. Today’s AI systems still lack deep context: they don’t inherently understand a company’s unique culture, the nuanced ways decisions are actually made, why those decisions might drive value, or how they could demoralize an organization. Put simply, they don’t understand how everything fits together in the real world. Every output needs to be verified, and because AI is non-deterministic, asking the same question three times can produce three slightly different answers. In a multi-step agent workflow, those small variations can compound into actual errors.
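A rough way to see why small per-step variations matter: if we assume, purely for illustration, that each step of an agent workflow succeeds independently with the same probability p, then the chance that an n-step run finishes with no errors is p^n. Real agent errors are rarely independent (one bad step often poisons the next), so treat this as a back-of-the-envelope sketch, not a model of any particular system.

```python
def workflow_success(per_step: float, steps: int) -> float:
    """Probability that every step succeeds, under the illustrative assumption
    that steps are independent and each succeeds with probability `per_step`."""
    return per_step ** steps


for p in (0.99, 0.999):
    for n in (5, 10, 20):
        print(f"per-step {p:.1%}, {n:2d} steps -> {workflow_success(p, n):.1%} end-to-end")
```

Under these assumptions, even a 99%-reliable step yields a 20-step workflow that completes cleanly only about 82% of the time.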

Even more fundamentally, AI is not motivated the way humans are. Humans are complex hylomorphic beings, meaning we are both body and soul, with intrinsic drives, values, and purposes shaped by lived experience. AI has none of that. It has no inner drive to “do the right thing” for the company or its people, and no sense of shared loyalty built through years of context within a team; it simply follows statistical patterns and the guardrails built into it. That absence of genuine morality and motivation makes true autonomy, the kind that acts in your company’s best interest in real-world business contexts, even harder to achieve.

Just like early FSD, today’s agents hit the easy 90-99% quickly, but then they slam into the long tail. The only proven way to climb from 99% toward the 99.99997% human-level reliability that businesses actually require is continuous human-in-the-loop feedback.

What’s the solution? At fiduciaries.ai (MUDRICK & ASSOCIATES), we believe we are solving this the right way. In addition to our process for understanding each client’s “context layer” before building solutions, we build custom AI agents that are architected and engineered from day one with structured human oversight and a powerful, intuitive GUI that puts control directly into our clients’ hands. Their domain experts can quickly and easily input and update business rules, apply custom filters, and even write or modify SQL logic (especially powerful for our SQL agents).

The human feedback loop is deliberate and ongoing. Our custom platform tracks performance metrics and captures structured thumbs-up or thumbs-down feedback on every interaction, prompting users for context when issues arise. All agent outputs are clearly attributed, and any underlying queries or rules are reviewable and editable by the client’s technical team in a secure, permissioned environment. Business rules and master prompts can be updated in real time. We collaborate regularly with each client’s tech team to review performance, discuss edge cases, and iterate, all while each side handles its own responsibilities under our Shared Responsibility Model.
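To illustrate the general pattern of structured feedback capture, here is a minimal, hypothetical Python sketch. The names `FeedbackEvent`, `FeedbackLog`, `approval_rate`, and `review_queue` are invented for this illustration; they are not the fiduciaries.ai platform’s actual code or API. The sketch only shows the idea: record a thumbs-up or thumbs-down per interaction, require context on negative feedback, compute a simple per-agent metric, and surface problem cases for review.

```python
from collections import defaultdict
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class FeedbackEvent:
    """One rating on one agent interaction (hypothetical schema)."""
    interaction_id: str
    agent: str
    thumbs_up: bool
    context: Optional[str] = None  # user's explanation, required on thumbs-down


@dataclass
class FeedbackLog:
    events: list = field(default_factory=list)

    def record(self, event: FeedbackEvent) -> None:
        # Mirrors "prompting users for context when issues arise":
        # a thumbs-down without an explanation is rejected.
        if not event.thumbs_up and not (event.context and event.context.strip()):
            raise ValueError("Please describe what went wrong before submitting.")
        self.events.append(event)

    def approval_rate(self) -> dict:
        """Share of thumbs-up ratings per agent, a simple performance metric."""
        ups, totals = defaultdict(int), defaultdict(int)
        for e in self.events:
            totals[e.agent] += 1
            ups[e.agent] += int(e.thumbs_up)
        return {agent: ups[agent] / totals[agent] for agent in totals}

    def review_queue(self) -> list:
        """Negative cases, with their context, for a joint edge-case review."""
        return [e for e in self.events if not e.thumbs_up]


# Hypothetical usage
log = FeedbackLog()
log.record(FeedbackEvent("q-1", "sql_agent", True))
log.record(FeedbackEvent("q-2", "sql_agent", False, "Revenue total excluded returns"))
print(log.approval_rate())  # {'sql_agent': 0.5}
```

Requiring context on every thumbs-down is the key design choice here: a bare negative rating says something failed, but only the user’s explanation tells the team which edge case to fix.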

Our Shared Responsibility Model explicitly delineates the division of accountability. It gives our clients meaningful ownership over continuously improving the agents, while we guarantee the underlying stability, security, and reliability of the entire system. True partnership is built in from the beginning.

This way, our clients don’t depend on us for every small change whenever their business evolves. Instead, they are empowered to drive the refinements, close the long tail faster, and keep the solutions generating additional value year after year.

This combination of expert-built agents, performance-based economics, fiduciary alignment, client-empowering tools, and a clearly defined Shared Responsibility Model turns our agentic solutions into reliable, value-added systems that scale with your business.

This is why MUDRICK & ASSOCIATES exists.

We don’t sell SaaS equivalents or “set it and forget it” AI pilot hype. We deliver AI solutions that are thoughtfully built with actual value add and people in mind. The long tail isn’t going anywhere, and reality will continue to be the judge. But with the right AI partner and a business model that aligns incentives, the long tail problem can become your competitive advantage instead of your biggest roadblock.